Career Profile

Technology leader with 30+ years bridging enterprise architecture, AI/ML, and cloud platforms. Career spans engineering leadership (IT Director, Development Manager), enterprise architecture (statewide technology standards for North Carolina state government), and hands-on platform engineering (AWS, EKS, production ML models). Security and compliance depth includes passing IRS SafeGuard audits, leading SOC 1/SOC 2 compliance across eight simultaneous audits, and serving on the Electronic Tax Administration Advisory Committee (ETAAC), a federal advisory committee reporting to Congress on technology and security policy. Currently completing an Executive MBA at UNC Wilmington, deliberately complementing technical depth with business strategy and organizational leadership.

Education

Pursuing an Executive MBA with a focus on Corporate Entrepreneurship, deliberately complementing 30+ years of technical depth with business strategy, finance, and organizational leadership. Applying coursework directly to technology adoption decisions, ROI analysis for platform investments, and organizational change management for AI/ML initiatives. EMBA Candidate — Expected Graduation: Spring 2027

Completed a five-year graduate program while working full-time — a deliberate investment in AI/ML depth that required sustained discipline across evenings, weekends, and years of compressed personal time. Specialization in Interactive Intelligence with coursework spanning machine learning, perception systems, natural language processing, computer vision, and knowledge-based AI. A cross-domain research collaboration with a PhD candidate in sociolinguistics produced a peer-reviewed publication in the Nordic Journal of Linguistics (2021) and a conference presentation at NWAV 46 — demonstrating how applied CS and ML skills serve as a force multiplier for domain experts in other fields.

The most significant outcome was the research collaboration with Nathan J. Young, a PhD candidate in sociolinguistics. Young had built phonetic dictionaries for Swedish and Danish but lacked the software engineering capability to operationalize them. I generalized the FAVE forced alignment toolkit to handle non-English language features through a configuration-driven architecture, built the processing pipeline, and created a web interface for the research community. The result: a CS engineer publishing in a linguistics journal because the cross-domain bridge created research capability that neither discipline could achieve alone.

This pattern — bringing engineering rigor and platform thinking to accelerate domain experts — is the same model that drives successful AI/ML organizations. Data scientists, researchers, and business analysts have domain knowledge; platform engineers build the infrastructure that makes that knowledge operational. The Georgia Tech research was an early proof point for a career spent bridging those disciplines.

- NWAV 46 Conference Presentation “155. Introducing NordFA: Forced alignment of Nordic languages. Nathan J. Young & Michael McGarrah”

- Abstract: Forced alignment for Nordic languages: Rapidly constructing a high-quality prototype. (PDF)

- LG-FAVE Software Repository

Completed a Bachelor of Science in Computer Science graduating with Honors while working full-time and raising a family.

- Member Association for Computing Machinery (ACM)

- Member Institute of Electrical and Electronics Engineers (IEEE)

- Member Association of Information Technology Professionals (AITP) (archive)

- Upsilon Pi Epsilon (UPE): International Computer Science honor society

Experiences

Leading cloud platform architecture across 20+ AWS accounts spanning every billing and data platform in the enterprise. Recovered a critical cross-enterprise data engineering project under pressure, then served as technical advisor to the engineering team on AWS data pipeline architecture — accelerating delivery by leveraging prior BCBSNC experience with similar patterns. Founded and led the cross-team EKS Working Group, driving Kubernetes architecture decisions and building an enterprise community of practice through influence rather than mandate. Built the observability framework (New Relic with Confluence-linked runbooks and Terraform-provisioned dashboards) that became a company-wide standard. Led SOC 1/SOC 2 compliance from a single inaugural audit to eight simultaneous audits across all products, automating evidence collection and serving as primary technical liaison to external auditors. Delivered the first AI/ML production workload on the Billing platform (AWS Bedrock Data Automation). Drove measurable cost optimization through EKS right-sizing, scale-to-zero workloads, and Karpenter migration.

ACE/IMPACT — Data Strategy Recovery, Technical Advisory & Enterprise Observability

Recovered a critical cross-enterprise data engineering project (ACE/IMPACT) in three weeks of intensive work after the prior owner’s departure — touching every product team and technology stack in the enterprise. ACE (Automated Custodian Extract) processed custodian data from Pershing and Schwab through raw → standardized → curated pipeline stages. IMPACT provided market data and ESG data products with an API gateway layer serving WDP, Finance, and RevOps consumers. Together they formed the core of Envestnet’s Data Strategy initiative and were significant precursors to the Unified Portfolio Accounting (UPA) system.

The recovery earned executive sponsorship and established the cross-enterprise access and trust that enabled every subsequent initiative. But the contribution went beyond infrastructure recovery — served as technical advisor to the engineering team on AWS data pipeline implementation, fast-tracking their adoption of Airflow DAGs, EMR jobs, S3 data lake patterns, Glue crawlers, Lambda, and Step Functions. These were unfamiliar AWS services for the software engineers; prior experience building similar pipelines at BCBSNC (where the same Airflow/EMR/S3 patterns powered the CarePath ML framework) allowed me to accelerate the team’s delivery and shape their architectural decisions rather than just provisioning infrastructure underneath them.

Built the observability framework during the ACE/IMPACT recovery that became a company-wide standard: New Relic dashboards provisioned via Terraform with every monitored metric linked to a Confluence runbook documenting the metric’s meaning, expected ranges, response procedures, and escalation paths. This was not just monitoring; it was operational documentation as code, giving on-call staff actionable runbooks directly from the alerting surface. ACE/IMPACT was the first project to incorporate this technique, and the pattern was subsequently adopted across the enterprise as the standard for new observability deployments.

The legacy ACE/IMPACT pipelines (Lambda, Step Functions, EC2-based EMR, DMS — deployed via CloudFormation SAM in 2022) continue running in DataLake us-east-1 under ongoing operational support. Continue maintaining these legacy workloads with the Data Strategy team while simultaneously building the replacement platform: containerized EMR on EKS in the DataLake us-east-2 clusters. The technology gap between the legacy EC2-based EMR (2022 vintage) and the virtual EMR-on-EKS implementation (2026) is substantial — moving from standalone EC2 clusters with CloudFormation lifecycle management to Spark workloads running as native Kubernetes pods with shared compute, Karpenter-managed scaling, and unified observability. The EMR-on-EKS implementation is a major milestone in the Data Strategy migration, enabling the decommissioning of the legacy us-east-1 infrastructure and completing a four-year arc from emergency project recovery to modern containerized platform.

Data Lake Initiative — Cross-Enterprise Data Integration (Year 1)

Led an organizational influence campaign to extract data from every product group into the central data lake — achieving over 90% coverage across the enterprise within the first year. Approximately one-third of teams collaborated willingly, one-third required demonstrated value from early wins to convert, and one-third required escalation with constructive proposals to management. This was not an engineering project — it was an organizational influence campaign executed without positional authority, navigating politics and building trust across teams with no reporting relationship. Simultaneously served as the dedicated SRE resource for the entire EDI portfolio (15+ AWS accounts across four product lines).

Unified Portfolio Accounting (UPA)

UPA is a portfolio accounting platform using cloud-native technologies for a highly scalable service to maintain, balance, adjust, and validate financial data from custodian platforms and trusted connections to existing platforms like UMP and Tamarac. The system includes EKS with integrations for vEMR (Hadoop), Airflow (MWAA), and custom workflows — containerized microservices managed on a service mesh deployed from managed CI/CD pipelines with IaC-managed infrastructure.

Implemented KEDA (Kubernetes Event-Driven Autoscaling) for workload management on the UPA EKS clusters — enabling event-driven scaling of processing workloads based on queue depth, schedule, and custom metrics rather than static replica counts. This was a major piece of the platform build-out that allowed the system to handle variable custodian data volumes efficiently.
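As a rough illustration of queue-depth scaling (a simplified sketch, not the UPA configuration), the replica count can be thought of as the backlog divided by a per-replica target, clamped to bounds. KEDA itself handles activation between zero and one replica and hands scaling beyond that to the Kubernetes HPA; the function name, target, and limits below are hypothetical.

```python
import math


def desired_replicas(queue_depth, target_per_replica, min_replicas=0, max_replicas=20):
    """Replica count for a queue-driven workload.

    Follows the average-value rule used for external metrics:
    ceil(total backlog / target per replica), clamped to the bounds.
    All default values here are illustrative assumptions.
    """
    if target_per_replica <= 0:
        raise ValueError("target_per_replica must be positive")
    if queue_depth <= 0:
        # Empty queue: fall back to the floor (a floor of 0 means scale-to-zero).
        return min_replicas
    wanted = math.ceil(queue_depth / target_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


if __name__ == "__main__":
    for depth in (0, 1, 120, 5000):
        print(depth, "messages ->", desired_replicas(depth, target_per_replica=50), "replicas")
```

A static replica count has to be sized for the peak; a rule like this lets the floor drop to zero when no custodian data is waiting.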

Replaced the default AWS VPC CNI with Calico to resolve IP address exhaustion issues during large scaling events. The AWS CNI’s IP warm pool and refresh rates could not keep pace with rapid node scaling — pods would fail to schedule because the CNI couldn’t allocate IPs fast enough from the VPC subnet. Calico’s overlay networking eliminated the dependency on VPC IP allocation entirely, decoupling pod networking from subnet capacity and enabling the aggressive scaling patterns the platform required.
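The subnet arithmetic behind that decision can be sketched as follows. This is a deliberately simplified model under stated assumptions: it ignores warm pools, per-ENI limits, and prefix delegation, so the VPC CNI figure is an upper bound. The CIDR and node counts are hypothetical; the only AWS fact relied on is that five addresses are reserved in every subnet.

```python
import ipaddress

AWS_RESERVED_PER_SUBNET = 5  # first four addresses and the last one


def usable_ips(cidr):
    """Addresses in the subnet that AWS will actually hand out."""
    return ipaddress.ip_network(cidr).num_addresses - AWS_RESERVED_PER_SUBNET


def max_pods_vpc_cni(cidr, nodes):
    """Upper bound on pods when every node and every pod takes a VPC address."""
    return max(0, usable_ips(cidr) - nodes)


def vpc_ips_needed_overlay(nodes):
    """With an overlay, only nodes take VPC addresses; pods use a separate pod CIDR."""
    return nodes


if __name__ == "__main__":
    cidr, nodes = "10.0.0.0/24", 20
    print("usable subnet addresses:", usable_ips(cidr))
    print("pod ceiling with VPC-native pod IPs:", max_pods_vpc_cni(cidr, nodes))
    print("VPC addresses consumed with an overlay:", vpc_ips_needed_overlay(nodes))
```

In the overlay case the pod ceiling is set by the pod CIDR rather than the subnet, which is why rapid node scaling stops competing with pods for addresses.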

Implemented scale-to-zero workload patterns using a split node group architecture: spot-instance node groups for worker processes (data engineering and batch workloads) managed by a smaller classic EC2 on-demand node group running the orchestration layer. This technique was brought directly from BCBSNC where the same pattern was used for GPU-enabled spot instance nodes running ML model training — spot for the expensive compute, on-demand for the control plane that manages it. Scale-to-zero kept the UPA platform within budget by eliminating idle compute costs during off-hours, making the infrastructure financially viable on available budgets.

Small and Medium Business Portal (SMB Portal)

SMB Portal gives businesses a platform for making important decisions more easily and acting on their data. The integrated portal includes the apps that most SMBs use to run their businesses and creates greater opportunity for growth and partnership between SMBs and banks. The software was built as cloud-native containerized microservices and later migrated to AWS managed services. Adopting IaC (Infrastructure as Code) and converting from on-premises and alternate cloud vendors were the major hurdles to implementation and to bringing the product to a managed state.

Data Science / AI / ML Platform

Delivered the first AI/ML production workload on the Billing platform (AWS Bedrock Data Automation), evaluating multiple approaches and selecting the winner through POC. Built and operated the enterprise SageMaker platform from initial deployment through account migration, creating VPC endpoints across all four environments for ML inference pipelines. Managed the SageMaker environments as IaC via GitLab, provisioned network access from notebook environments to internal services (GitLab, Nexus, Harbor), and operated a dedicated Data Science EKS cluster. Supported multiple internal data science projects using serverless ML (Lambda/Step Functions) and SageMaker with container deployment mechanisms.

Data Marketplace

External vendor web application with internal compute and storage for a data management platform in the financial services space. The primary implementation challenges were integrating with internal systems via managed processes and addressing security concerns.

EKS Platform Operations & Migrations (2023–2026)

Led complex EKS upgrades across multiple platforms (Microservices-Accounting, MDS, UMP, Payments, DataLake) through versions 1.28–1.33. The DataLake EKS 1.32 upgrade was the most complex to date — navigating aws-auth ConfigMap deprecation, external-dns migration, and coordinated Airflow helm chart upgrades requiring custom container image rebuilds.

EKS Working Group — Cross-Team Technical Leadership (2022–2024)

Founded and led the enterprise EKS Working Group — a bi-weekly cross-team forum that brought together Platform Engineering, COE, UMP PRO, TAMPRO, DnA/Yodlee, and DevOps teams around shared Kubernetes standards and architecture decisions. Led by influence rather than mandate, modeled on the “birds of a feather” sessions from RFC and standards work earlier in career: every session had a valuable agenda item that gave people a reason to show up.

Drove enterprise-wide architecture decisions through the working group: Calico CNI adoption (replacing AWS VPC CNI), Istio service mesh implementation, ArgoCD centralization and Okta integration, KEDA event-driven autoscaling, Karpenter adoption, Kubecost implementation, and OPA Gatekeeper policy enforcement. Authored technical documentation on each feature area and coordinated EKS version upgrades from v1.22 through v1.30+ across the enterprise, managing breaking changes including Dockershim removal, PodSecurityPolicy deprecation, and containerd migration.

The working group succeeded in its mission — the enterprise went from fledgling Kubernetes knowledge to multiple groups of experts who communicate actively. As the community matured and self-organized, the need for the formal working group diminished, which was the intended outcome: build the community, transfer the knowledge, and let the organization sustain it independently.

AWS QuickSight — Revenue Manager BI Platform (2024–2026)

Led the full-lifecycle migration of Revenue Manager’s BI platform from self-hosted Tableau on EC2 to AWS QuickSight — from initial Terraform POC through production deployment serving external financial services clients. QuickSight was not a well-integrated managed service at the time — Terraform support was incomplete, federated authentication was undocumented, and multi-tenant isolation required custom tooling that didn’t exist. Built the solutions for each gap:

Implemented Okta SSO federated authentication with Admin/Author/Reader role mappings. Built GitLab CI/CD automation for cross-account, cross-region QuickSight asset promotion — parameterized deployment pipelines enabling multi-client onboarding with namespace-based tenant isolation. Created IP-restricted client instances for external access. Designed custom vanity URLs (rmanalytics.redi2.com) using the same CloudFront Function redirect pattern later published as a Terraform Registry module. Built cost and usage dashboards for internal visibility.

The migration eliminated hundreds of thousands of dollars annually in Tableau licensing costs while gaining tighter integration with the AWS data infrastructure (S3, Glue, Athena). The platform now serves multiple environments (Dev/UAT/Prod) across regions (us-east-1, eu-west-2 for UK clients).

In 2026, extending the platform with QuickSight embedded analytics — implementing the GenerateEmbedUrlForRegisteredUser API to enable white-label dashboard embedding directly into the Revenue Manager product UI (Quick Suite). This required IAM policy changes across Dev and Prod, permission model debugging for the embedded context, and coordination with the product team on the integration surface. The embedded analytics capability transforms QuickSight from a standalone BI tool into a native component of the Revenue Manager product experience. Additional 2026 work includes Snowflake OAuth connectivity research for expanded data source integration.
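A minimal sketch of the embed-URL request, assuming boto3 and a registered QuickSight user; it is not the Revenue Manager integration code. The helper names, account ID, ARN, and dashboard ID are placeholders. The parameter names follow the GenerateEmbedUrlForRegisteredUser API, whose session lifetime must fall between 15 and 600 minutes.

```python
def build_embed_request(account_id, user_arn, dashboard_id,
                        session_minutes=60, allowed_domains=None):
    """Assemble keyword arguments for generate_embed_url_for_registered_user."""
    if not 15 <= session_minutes <= 600:
        raise ValueError("session lifetime must be between 15 and 600 minutes")
    params = {
        "AwsAccountId": account_id,
        "UserArn": user_arn,
        "SessionLifetimeInMinutes": session_minutes,
        "ExperienceConfiguration": {
            "Dashboard": {"InitialDashboardId": dashboard_id},
        },
    }
    if allowed_domains:
        # Domains permitted to host the embedded frame.
        params["AllowedDomains"] = list(allowed_domains)
    return params


def generate_embed_url(params, region="us-east-1"):
    """Call QuickSight; needs boto3 and IAM permission for the embed action."""
    import boto3  # imported lazily so the request builder works without AWS access

    client = boto3.client("quicksight", region_name=region)
    return client.generate_embed_url_for_registered_user(**params)["EmbedUrl"]


if __name__ == "__main__":
    request = build_embed_request(
        "123456789012",  # placeholder account ID
        "arn:aws:quicksight:us-east-1:123456789012:user/default/example-user",
        "example-dashboard-id",
        allowed_domains=["https://app.example.com"],
    )
    print(request)
```

Keeping the request builder separate from the AWS call makes the permission model easier to debug, since the exact parameters can be inspected before any IAM evaluation happens.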

EMR on EKS — DataLake Modernization (2026)

Architecting EMR on EKS infrastructure for DataLake platform modernization — migrating legacy provisioned EMR Spark workloads (ACE custodian data normalization, Impact market data pipelines) from dedicated EC2-based EMR clusters in us-east-1 to containerized virtual clusters on the DataLake EKS platform in us-east-2. Implementing Karpenter-based dynamic node scaling with on-demand nodes for Spark drivers and spot instances for executors, Airflow-integrated job submission, scoped IAM execution roles with per-stage S3 bucket access (landing → raw → standardized → curated), EFS shared storage for EMR pods, CloudWatch log groups for Spark job monitoring, and dedicated namespaces with pod templates per environment.
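One way to picture the per-stage scoping is a policy generator in which each stage's job role may read only the stage upstream of it and write only its own stage. That read/write split is an assumption made for illustration, as are the bucket naming scheme and the function name; the sketch only produces the policy document and does not create roles.

```python
import json

STAGES = ["landing", "raw", "standardized", "curated"]


def stage_policy(stage, bucket_prefix="example-datalake"):
    """IAM policy document for the job that produces `stage` from the stage before it."""
    index = STAGES.index(stage)  # raises ValueError for an unknown stage
    if index == 0:
        raise ValueError("landing has no upstream stage to read from")
    source = f"{bucket_prefix}-{STAGES[index - 1]}"
    target = f"{bucket_prefix}-{stage}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadUpstream",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{source}", f"arn:aws:s3:::{source}/*"],
            },
            {
                "Sid": "WriteOwnStage",
                "Effect": "Allow",
                "Action": ["s3:PutObject"],
                "Resource": [f"arn:aws:s3:::{target}/*"],
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(stage_policy("standardized"), indent=2))
```

Scoping each execution role this way means a misbehaving job in one stage cannot overwrite data in a stage it does not own.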

This completes a four-year arc: advised on the original ACE/Impact pipeline implementation (2022), provided operational support for the legacy us-east-1 workloads (2022–present), upgraded the DataLake EKS clusters through versions 1.29 → 1.31 → 1.32 → 1.33, and now building the EMR on EKS infrastructure that containerizes the last remaining legacy workloads onto the modern platform. The technology distance between the 2022 EC2-based EMR (CloudFormation-managed, static cluster sizing, dedicated hardware) and the 2026 EMR on EKS (Kubernetes-native Spark, Karpenter spot scaling, shared cluster infrastructure, unified observability) represents a full generation of data platform architecture.

Security Hardening (2025–2026)

Enforced IMDSv2 across Payments and SalesOps compute resources, eliminating instance metadata v1 attack surface as part of company-wide security hardening. Remediated high-severity Wiz CSPM findings across Billing & Payments accounts (KMS excessive access, service account privilege issues). Restricted Revenue Manager security groups to specific IP ranges, adding defense-in-depth network segmentation. Implemented WAF configurations and CrowdStrike Falcon sensor upgrades across EKS clusters.
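A small sketch of how IMDSv2 enforcement might be audited and applied with boto3; this is illustrative rather than the tooling actually used. The audit function works on a dictionary shaped like the EC2 DescribeInstances response, and the function names and instance IDs are hypothetical.

```python
def instances_allowing_imdsv1(describe_instances_response):
    """Return IDs of instances whose metadata endpoint still accepts IMDSv1."""
    offenders = []
    for reservation in describe_instances_response.get("Reservations", []):
        for instance in reservation.get("Instances", []):
            options = instance.get("MetadataOptions", {})
            if options.get("HttpEndpoint") == "disabled":
                continue  # no metadata endpoint at all, nothing to harden
            if options.get("HttpTokens") != "required":
                offenders.append(instance["InstanceId"])
    return offenders


def enforce_imdsv2(instance_ids, region):
    """Require session tokens on each instance; needs boto3 and EC2 permissions."""
    import boto3  # imported lazily so the audit function runs without AWS access

    ec2 = boto3.client("ec2", region_name=region)
    for instance_id in instance_ids:
        ec2.modify_instance_metadata_options(
            InstanceId=instance_id,
            HttpTokens="required",
            HttpEndpoint="enabled",
        )


if __name__ == "__main__":
    sample = {"Reservations": [{"Instances": [
        {"InstanceId": "i-0example1", "MetadataOptions": {"HttpTokens": "optional", "HttpEndpoint": "enabled"}},
        {"InstanceId": "i-0example2", "MetadataOptions": {"HttpTokens": "required", "HttpEndpoint": "enabled"}},
    ]}]}
    print(instances_allowing_imdsv1(sample))
```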

Cost Optimization

Cost optimization was a sustained initiative across the tenure, with the largest wins coming from the UPA platform architecture decisions described above: scale-to-zero with spot-instance worker node groups, KEDA event-driven autoscaling replacing static replica counts, and Calico CNI eliminating subnet IP exhaustion during scaling events. Additional wins included a Cluster Autoscaler fix that eliminated orphaned EC2 instances accumulating silently, EKS node right-sizing from 4xlarge to 2xlarge across all environments, Kubecost implementation for granular per-namespace cost visibility, Karpenter migration from Cluster Autoscaler for more efficient bin-packing and spot instance management, and decommissioning of unused AWS accounts (Neo4J, upSWOT) to reduce account sprawl and costs.

Migrating legacy RDS clusters and EC2-hosted databases to AWS Aurora Serverless v2 instances — eliminating the cost of provisioned capacity that sits idle during off-peak hours. Built a custom Terraform module to manage the complexity of the migration: the module handles both existing RDS clusters and new Aurora Serverless clusters under a single interface, validating parameters and enforcing configuration consistency as workloads are migrated incrementally. The unified module reduces the risk of misconfiguration during migration and provides a repeatable pattern for each database workload moved to serverless.

SOC 1 & SOC 2 Compliance (2022–2026)

Led a four-year progression in SOC compliance scope that culminated in enterprise-wide ownership of the compliance initiative:

2022–2023 — Ad-hoc Evidence: Provided SOC evidence as an SME in isolation without full audit context, while focused on infrastructure build-out, automation tooling, and extensive documentation to shore up gaps left by departing personnel. Security and compliance background from NC DOR (IRS SafeGuard) and SAS Institute (FDA CFR Part 11) had not yet been surfaced to leadership — the immediate priority was stabilizing infrastructure and consolidating inconsistent practices across teams.

2024 — First Full Audit: Primary infrastructure evidence provider for the inaugural Envestnet Payments SOC 2 Type 1 audit, completing 25+ compliance tasks covering availability, security, change management, data processing, and confidentiality controls. First end-to-end audit engagement where the security background became visible to leadership.

2025 — Eight Simultaneous Audits + Vendor Transition: Led infrastructure compliance evidence collection for 8 simultaneous SOC 1/SOC 2 Type 2 audits across every platform in the portfolio: Revenue Manager, Wealth Manager, BillFin, Payments, Tamarac, UMP, ERS, and WDP. Completed 40+ individual evidence tasks. Navigated a mid-cycle audit vendor transition — half of the 2025 audits were conducted under the incoming vendor’s processes and evidence requirements, requiring adaptation to new auditor expectations, evidence formatting standards, and communication protocols while maintaining continuity across the audits still running under the outgoing vendor. Automated evidence collection from multiple sources, replacing manual processes with repeatable tooling that reduced preparation effort and improved consistency across product areas. Established standardized evidence practices that brought previously inconsistent product teams into alignment — a direct result of both the automation and the new operational controls introduced during the 2025 cycle. Validated controls and provided direct responses to external auditors as the primary technical liaison.

Served as subject matter expert across multiple audit domains including development workflow and SDLC practices, CI/CD pipeline controls, authentication and authorization architecture, operating system hardening and configuration management, DevOps operational practices, and infrastructure security controls. This breadth of expertise — spanning application security, platform engineering, and operational compliance — made the role a natural extension of the security and compliance work from earlier career positions at NC DOR (IRS SafeGuard) and SAS Institute (FDA CFR Part 11).

2026 — Full Vendor Migration + Expanded Scope: All audits now conducted under the new audit vendor with fully adapted processes. Expanding scope to cover all product areas with increased leadership over the compliance process, including defining the audit preparation timeline, managing auditor relationships, and driving remediation of control gaps identified in the 2025 cycle.

Architected and led development of the enterprise data science platform — multi-account AWS infrastructure (EKS, EMR, RDS, Lambda), containerized ML execution environment, and CI/CD pipelines — powering production deep learning models processing claims data from all NC members, emergency rooms, and hospitals under near-real-time requirements. Operated with director-equivalent authority: conducted performance reviews, drove salary increases, executed performance improvement plans, led hiring, and dotted-line reported to the SVP. Managed cost controls across the EKS platform and the team of platform engineers building it — spot-instance and GPU-enabled node groups with scale-to-zero scheduling, activity-based cost tracking, and remediation workflows for developers and data scientists provisioning AWS resources. Delivered the Medicare Guided Selling system as a fast-track serverless deployment (Lambda, API Gateway, DynamoDB) to meet an SVP-driven timeline before the full platform was ready. Built the CarePath ML framework that produced award-winning models for Complex Case Management (ISSIP Excellence in Service Innovation Award), Hospital-to-Home transitions, and cardiovascular/diabetes risk prediction.

Cloud-First Platform (AWS)

Built a complete multi-account AWS platform from the ground up, managed entirely via CloudFormation stacks. Everything from the foundational layers (VPC, IAM roles and policies) up through higher-level components (shared RDS clusters, EKS clusters, EMR) and their dependent services (S3, ACM PCA, EFS with CSI integration to EKS, Route53 integration with enterprise Infoblox, and others as necessary) was managed this way. This carried overhead but produced a highly consistent, managed cross-account environment. Where CloudFormation did not offer a mechanism to manage a service completely, the implementation included writing Lambda functions and CloudFormation Custom Resources to fill the gap.
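For readers unfamiliar with Lambda-backed Custom Resources, the general shape of a handler is sketched below. This is a generic illustration of the CloudFormation request/response contract, not code from the platform: the handler reads RequestType from the event, does its work, and PUTs a JSON result to the pre-signed ResponseURL. The placeholder resource name and the "Message" data field are hypothetical.

```python
import json
import urllib.request


def build_response(event, status, physical_id, data=None, reason=""):
    """Body CloudFormation expects back from a custom resource."""
    return {
        "Status": status,  # "SUCCESS" or "FAILED"
        "Reason": reason or "See CloudWatch Logs for details",
        "PhysicalResourceId": physical_id,
        "StackId": event["StackId"],
        "RequestId": event["RequestId"],
        "LogicalResourceId": event["LogicalResourceId"],
        "Data": data or {},
    }


def send_response(event, body):
    """PUT the result to the pre-signed URL supplied in the event."""
    payload = json.dumps(body).encode("utf-8")
    request = urllib.request.Request(
        event["ResponseURL"],
        data=payload,
        method="PUT",
        headers={"Content-Type": ""},  # empty so the pre-signed URL signature still matches
    )
    urllib.request.urlopen(request)


def handler(event, context):
    """Lambda entry point; the service-specific work is only a placeholder here."""
    physical_id = event.get("PhysicalResourceId", "example-custom-resource")
    try:
        if event["RequestType"] in ("Create", "Update"):
            data = {"Message": "configured"}  # real service calls would go here
        else:  # "Delete"
            data = {}
        body = build_response(event, "SUCCESS", physical_id, data)
    except Exception as exc:  # always report back, or the stack waits until timeout
        body = build_response(event, "FAILED", physical_id, reason=str(exc))
    send_response(event, body)
```

The important design point is that every code path sends a response; a handler that raises without replying leaves the stack operation hanging until CloudFormation times out.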

Containerized Development (Kubernetes)

Implemented standardized local Kubernetes development with Docker for Windows/Mac and a managed AWS EKS cluster per AWS account. Local development initially used docker-compose for deployments to Kubernetes in Docker (KinD), later migrating to Helm 3 charts. The EKS deployments were managed with a shared cross-account CI/CD pipeline giving developers full control over builds and deployments. Spot-instance CPU and GPU-enabled EC2 worker nodes, with GPU node groups scaled to zero when idle, provided a cost-effective execution platform. The EKS clusters and associated AWS resources (EFS, Route53, ACM, VPC, etc.) were all managed via CloudFormation stacks (or scripted configuration changes), with infrastructure deployments synchronized across development stages. Major Helm-managed cluster components included External-DNS, Nginx-Ingress with NLB, Cluster Autoscaler, the NVIDIA device plugin, Prometheus with resource metrics, KubeWatch, and EFS CSI drivers. Granting Kubernetes Service Account (KSA) permissions via AWS IAM roles and policies at the container level rather than the node level added security for managed access to AWS resources. Extensions using AWS Fargate container execution were enabled in a non-HIPAA environment while compliance requirements were worked out.

Medicare Guided Selling Tool (MGS)

Delivered as a fast-track project to meet an SVP-driven timeline — the AWS platform was not yet ready for general use, so I designed and shipped a complete serverless system in weeks. Built a fully integrated Serverless Framework (SLS) implementation using AWS API Gateway backed with Lambda functions in Python3, providing a REST API for WebUI developers pulling content from a DAX/DynamoDB datastore populated by a custom containerized event-driven ETL process. This public-facing production system went live in June 2019 and served NC Medicare members through 3Q2021. The rapid delivery demonstrated the ability to ship production systems under executive pressure while the broader platform was still being built — and positioned me as both infrastructure architect and first consumer of the platform services the team provided to the enterprise.

Drug Lookup and Calculation (DLC)

The DLC project was an investigation into a standard enterprise-wide REST API that would allow fast drug lookups by substring for ease of search in a web UI, validation of drug information with parameters, and cost calculations when member details were available to map against plan benefits. The pilot REST API was implemented rapidly as part of MGS for 2Q2021 deliverables and then made available across the enterprise as its value was broadly recognized.
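The substring lookup idea can be illustrated with a few lines of Python. This is only a sketch of the search behavior a type-ahead field needs, not the DLC implementation: the function name, the minimum query length, the ranking rule, and the sample catalog are all assumptions.

```python
def find_drugs(query, catalog, limit=10):
    """Case-insensitive substring search, with prefix matches ranked first."""
    needle = query.strip().lower()
    if len(needle) < 2:
        return []  # too short to be a useful type-ahead query
    hits = [name for name in catalog if needle in name.lower()]
    # Names starting with the query sort ahead of mid-word matches, then alphabetically.
    hits.sort(key=lambda name: (not name.lower().startswith(needle), name.lower()))
    return hits[:limit]


if __name__ == "__main__":
    sample_catalog = ["Atorvastatin", "Metformin", "Lisinopril", "Simvastatin", "Rosuvastatin"]
    print(find_drugs("statin", sample_catalog))
    print(find_drugs("met", sample_catalog))
```

A linear scan like this is fine for a small in-memory list; a production API over a full formulary would more likely rely on an indexed datastore.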

CarePath (Machine Learning) Models

CarePath is a software framework developed by Blue Cross NC that acts as a deep learning model factory. It constructs state-of-the-art deep neural networks which learn and then recognize the patterns in sequences of claims which eventually lead to particular health events. This enables Blue Cross to accurately identify members at risk for a wide range of preventable events.

We used this in-house general framework to build specific health related models to identify member level risks and possible health impact to focus our care management programs on those members at greatest risk and improve their health outcomes. Production examples of this include:

- Complex Case Management (CCM) models for predicting both potential hospital initial admissions and separate readmissions after care using claims and other historical data to target members at risk with the goal of improving health outcomes. These two models have had a measurable impact on member health.

- Hospital to Home (H2H) model to identify members at high risk for readmission who need support transitioning from inpatient care to their homes.

- Additional models for cardiovascular disease and diabetes

The CCM model was recognized with an Excellence In Service Innovation Award from the International Society of Service Innovation Professionals (ISSIP) and the H2H model with an Innovator Award from Healthcare Innovation.

My role was to validate the framework and models and to help make the code base production ready. I was also the primary implementer of the enterprise production-capable platform for the final product. The platform initially ran on local laptops and later on an AWS EC2 instance. I performed code reviews and changes for the framework source code, individual model data prep and code, and enterprise processes and data management as we moved out of the internal POC. I rewrote sections of the framework and model code to allow migration to a scalable, cost-effective, containerized managed Kubernetes (K8S) deployment on our multi-stage secure cloud-native development platform, which provided a fully self-managed developer experience via a cross-account CI/CD pipeline and enabled fast development cycle times. Further improvements I added included zero-scaled K8S GPU nodes instantiated via resource taint/toleration, scalable and highly available scoring access for the daily public model releases, and secure access to managed internal-only datastores for model generation.
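The taint/toleration mechanism that keeps ordinary pods off GPU nodes can be sketched as a small matching function. It mirrors the Kubernetes matching rules in simplified form and is not platform code; the taint key and values used in the example are hypothetical.

```python
def tolerates(toleration, taint):
    """True if a single toleration matches a single node taint."""
    # An empty toleration effect matches any taint effect.
    if toleration.get("effect") and toleration["effect"] != taint.get("effect"):
        return False
    key = toleration.get("key", "")
    if toleration.get("operator", "Equal") == "Exists":
        # Exists with an empty key tolerates every taint.
        return key == "" or key == taint.get("key")
    return key == taint.get("key") and toleration.get("value", "") == taint.get("value", "")


def can_schedule(pod_tolerations, node_taints):
    """A pod fits only if every blocking taint on the node is tolerated."""
    blocking = [t for t in node_taints if t.get("effect") in ("NoSchedule", "NoExecute")]
    return all(any(tolerates(tol, taint) for tol in pod_tolerations) for taint in blocking)


if __name__ == "__main__":
    gpu_taint = {"key": "example.com/gpu", "value": "true", "effect": "NoSchedule"}
    training_pod = [{"key": "example.com/gpu", "operator": "Exists", "effect": "NoSchedule"}]
    print("training pod fits GPU node:", can_schedule(training_pod, [gpu_taint]))
    print("ordinary pod fits GPU node:", can_schedule([], [gpu_taint]))
```

Because only pods carrying the toleration can land on the tainted node group, the autoscaler has a reason to start a GPU node only when such a pod is pending, and the group can sit at zero otherwise.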

Administered a 25-node SAS Viya in-memory analytics cluster (26TB RAM) with connectivity to a 50+ node Hadoop data lake approaching 1PB, operating on a closed network under NIST 800-53 high security controls with DEA data hosted. Worked directly with the USPS Chief Data Scientist and his team of data scientists, providing platform engineering, data engineering, and machine learning infrastructure support, including building custom data acquisition modules that gave the data science team access to production-quality geospatial and operational datasets they couldn’t obtain from existing sources. Stabilized platform operations, built CISO-compliant administration automation (Python Fabric, Ansible), and constructed a complete Hadoop development platform replicating production for upgrade planning.

Advanced Visual Analytics — SAS Viya

Stabilized the SAS analytics platforms for the data scientists group. Administered, documented, and configured the in-memory clustered analytics platform across a 25-node cluster with 26TB of RAM and remote connectivity to a 50+ node Hadoop DataLake. The limited documentation left from the initial installation was the first priority to remedy; stabilizing services and planning a platform upgrade came next. Technology stack included Ansible 2.2.1/2.3.2 automation for platform installation and configuration with a custom Python Fabric 1.14.x extension for system administration automation and management, providing a standardized method for administration tasks. R and Python 2.7/3.6 were used extensively for scientific computing by data scientists, with platform support provided for both. A heterogeneous Linux platform including RHEL, SUSE, and CentOS comprised the base OS. Integrated with multiple LDAP and Kerberos directory services for authentication and authorization. Built SAS Visual Analytics (VA) proofs of concept and demos for the data science team.

Analytics Programming Platform — SAS 9.4M5

Stabilized the SAS 9.4 programming platform and produced administration runbooks and best-practices documentation for UNIX sysadmin staff. Configured the programming platform for remote connectivity to the Hadoop DataLake, Teradata, and Oracle. Reverse-engineered and documented the existing installation. Integrated R and Python with scientific and machine learning libraries. Produced an upgrade plan for the platform.

Python Fabric — Admin Automation Framework @ USPS

Built a CISO-compliant system administration automation framework using Python Fabric at the USPS. Demonstrated the framework to system administrators and extended it for multiple services, providing a standardized and auditable method for administration tasks in the high-security environment.

Hadoop & SAS Integration — HIVE / HDFS

Implemented HIVE and HDFS integration with multiple LDAPS authentication providers for HortonWorks Hadoop and SAS Platforms. Integration methods included customized PAM/SSSD with work towards Kerberos integration.

Hadoop Development Platform

Built a complete Hadoop DataLake platform using HortonWorks Ambari to replicate the production USPS DataLake as a test environment for preparing an upgrade plan across multiple interdependent production services. The platform incorporated USPS CISO standards and utilized a beta local USPS IaaS Cloud (early adopter) offering based on the VMware vRealize Suite. Created a custom combination of Linux-based OpenLDAP and Microsoft AD for authentication and authorization to replicate production Hadoop DataLake complexities. The base OS used RedHat Linux 7.4 with USPS OS extensions. Implemented an NFS server to provide both a system-level distributed shared file system for HA and a shared home directory for PAM/LDAP-based UNIX accounts.

Data Science Engineering Support

Served as the bridge between the data science team and the data they needed for logistics and delivery optimization models. The existing GIS data available on the platform was of insufficient resolution for models that depended on precise geographic coordinate placement: it offered summarized data points rather than actual state and county boundary polygons. Built a Python module to extract high-resolution boundary data from ArcGIS via API, transform it into formats consumable by SAS and Viya, and load it into the analytics platform for the data scientists. This gave the logistics modeling team production-quality geospatial data that their models required but that no existing internal source could provide at sufficient resolution.
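A minimal sketch of the transform step, assuming the boundary data has already been retrieved and is available as a GeoJSON-style `FeatureCollection`. The function names, the `NAME` property, and the one-row-per-vertex CSV layout are illustrative assumptions, not the original module, and no ArcGIS API calls are shown.

```python
import csv
import io


def flatten_features(feature_collection, id_field="NAME"):
    """Yield one row per boundary vertex from a GeoJSON-style FeatureCollection."""
    for feature in feature_collection["features"]:
        region_id = feature["properties"][id_field]
        geometry = feature["geometry"]
        # Normalize Polygon to MultiPolygon nesting so one loop handles both.
        if geometry["type"] == "Polygon":
            polygons = [geometry["coordinates"]]
        elif geometry["type"] == "MultiPolygon":
            polygons = geometry["coordinates"]
        else:
            continue  # points and lines carry no boundary to flatten
        segment = 0
        for polygon in polygons:
            for ring in polygon:
                segment += 1  # each ring becomes its own numbered segment
                for seq, point in enumerate(ring, start=1):
                    yield {"id": region_id, "segment": segment, "seq": seq,
                           "x": point[0], "y": point[1]}


def to_csv(feature_collection, id_field="NAME"):
    """Render the flattened vertices as CSV text for import into an analytics tool."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["id", "segment", "seq", "x", "y"],
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(flatten_features(feature_collection, id_field))
    return buffer.getvalue()


# Hypothetical sample: a single county boundary with one closed ring.
sample = {
    "type": "FeatureCollection",
    "features": [{
        "type": "Feature",
        "properties": {"NAME": "Wake"},
        "geometry": {"type": "Polygon", "coordinates": [
            [[-78.9, 35.6], [-78.3, 35.6], [-78.3, 36.0], [-78.9, 35.6]],
        ]},
    }],
}
```

Calling `to_csv(sample)` produces a long table with one row per vertex, which keeps full polygon resolution while remaining a flat file that tabular tools can ingest.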

This was representative of a broader pattern in the role — identifying data gaps that blocked data science work, then building the acquisition and transformation pipelines to fill them. Multiple similar studies were conducted to source, transform, and deliver operational datasets that the data scientists needed but couldn’t access through standard channels. The role was not just platform administration — it was data engineering in direct service of the Chief Data Scientist’s team, ensuring they had the right data at the right resolution to build models that informed USPS delivery operations.

Served as cloud evangelist bringing AWS into an organization that was entirely data-center and vendor-hosted, overcoming significant resistance from data center engineers who feared cloud adoption would eliminate their roles. Coached the existing engineering team through the transition — demonstrating that cloud is infrastructure with automation built in, not a replacement for engineers, and that upskilling into scripting, IaC, and automation was the path to staying relevant. That investment in people was as critical to the migration’s success as the technical architecture. Built the AWS platform from nothing to a fully functional managed environment hosting AKC’s primary website and marketplace. Completed zero-downtime migration of akc.org in six weeks and marketplace.akc.org in two weeks, reducing hosting costs from $30,000/month to under $5,000/month while improving uptime and response times. Designed and implemented a complex three-way Active Directory synchronization across AWS, the on-premises AKC data center, and Office 365. Led application modernization from legacy ColdFusion and Perl to a MEAN stack with end-to-end CI/CD.

Cloud Services and Architecture

Oversaw the design and execution of the cloud computing strategy including cloud adoption plans, cloud application design, and cloud management and monitoring. Provided expertise in the definition, design, implementation, adoption, and adherence to enterprise architecture strategies, processes, and standards.

Rebuilt and redesigned the primary www.akc.org website to run on Amazon Web Services in a rapid migration from a hosted vendor environment. A lack of existing documentation, standards, and procedures had impeded earlier efforts at a seamless transition. Led and completed the migration in a break-neck six-week period with no customer impact. A similar migration of marketplace.akc.org, a large consumer transaction website, was completed in two weeks with a contracted partner assisting, again with no customer impact.

Reduced overall website costs from approximately $30,000 USD per month to less than $5,000 USD with plans for additional reduction to bring total under $3,500 per month. Improved uptime, reduced TTFB (time to first byte), and reduced variability of web page responses. Continued improvements for caching, database optimization, and deep application monitoring further improved user experience.

Implemented end-to-end CI/CD for the primary website with additional integration testing frameworks to improve developer effectiveness and increase the rate of deployments.

Led application modernization from legacy ColdFusion, Perl, and Java applications to a MEAN development stack utilizing AWS for the new environments. Solutions for the legacy migration included Amazon EMR, ELK log management, ElastiCache, CloudFront, Beanstalk, and various other AWS services. Impediments included a monolithic Oracle database structure and legacy desktop applications accessing the same data in real-time.

Programmer

Built an Amazon EMR implementation for the Application Modernization effort. Implemented ELK log management for individual application monitoring and management.

Evaluated website architecture and developed plans for implementing opcache with php-fpm to accelerate processing of dynamic PHP content. Redis and Memcache implementations were not optimized for short-span session content.

Rewrote sections of the PHP-based ExpressionEngine 2.9.3 CMS to be fully compliant with PHP 5.6 running with warnings treated as errors. Noticeably reduced script load times.

Redesigned the Varnish caching server implementation, rewriting HTTP session and redirection rules. The existing design allowed for replay of session actions for invalid responses.

Addressed redirection/rewrite rule proliferation — six locations for a rule to be enacted with the possibility of looping redirections. Built a separation of rule evaluation with logging into an ELK implementation to understand the scope of the challenge. The existing implementation had over twenty-six thousand (26,000) rules.
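The looping-redirection risk can be illustrated with a short sketch. It assumes the rules have already been normalized into exact-match source-to-target pairs, which is a simplification: pattern-based rules spread across several locations would first need to be expanded or sampled from logs. The function name and sample paths are hypothetical.

```python
def find_redirect_loops(rules):
    """Return the redirect cycles found in a {source_path: target_path} mapping."""
    loops = []
    settled = set()  # paths whose redirect chain has already been examined
    for start in rules:
        chain = []
        position = {}  # path -> index within the current chain
        current = start
        while current in rules and current not in settled and current not in position:
            position[current] = len(chain)
            chain.append(current)
            current = rules[current]
        if current in position:
            # The chain came back to a path it already visited: a loop.
            loops.append(chain[position[current]:])
        settled.update(chain)
    return loops


# Hypothetical sample rules: one three-step loop, one harmless redirect,
# and one rule that leads into the loop.
sample_rules = {
    "/a": "/b",
    "/b": "/c",
    "/c": "/a",
    "/old": "/new",
    "/x": "/a",
}
```

Because each source has a single target, every loop is reported once, and the whole rule set is checked in a single pass, which matters at a scale of tens of thousands of rules.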

Designed a method for domain root flattening that did not include AWS Route 53 but remained highly available.

Software Architecture

Led Hybrid Cloud work with Azure/Amazon integration, designing a software architecture for a new service-based platform using a microservices implementation and a serverless POC. Connected the Amazon environment to a local data center network hosting multiple data sources including Oracle and MongoDB. Managed a local MongoDB instance in the data center and implemented a MongoDB Atlas managed service that reduced engineering overhead and costs and increased availability. Atlas instances could be rebuilt rapidly for engineering evaluations and investigations, whereas the local and EC2-based instances were significantly heavier to provision for testing. Managed the intelligent load balancer transition and rules implementation across multiple isolated networks.

Provided architecture and implementation for modernizing the interactive web application platform to a MEAN (JavaScript) development stack with a DevOps workflow pipeline supporting multiple development workflows.

Designed a Hybrid Headless WordPress implementation for future merged and integrated public-facing websites, including a highly scalable AWS service-based implementation.

Deployed updated and enhanced GitLab, Phabricator, SONAR, Ansible, and Jenkins platforms in AWS for existing development with extensions using AWS-specific tools for IaC (CloudInit and CloudFormation).

Led enterprise-wide technology strategy for North Carolina state government in a dynamic environment with four direct-manager transitions, including a period of six months reporting directly to the State CIO (future Secretary of IT). Authored the Request for Information (RFI) for statewide data center network modernization and co-authored the Request for Proposals (RFP) with the State Risk Officer for a security standards rewrite based on NIST and DoD frameworks. Evaluated and responded to RFCs, policy and controls requests, and led program management for cloud technology adoption across Azure, OpenShift, and direct AWS engagement. Leveraged deep cross-agency relationships built over years as a state employee (NC DOR, UNC System, Community Colleges) and worked closely with the CISO on compliance and security initiatives — cross-domain knowledge from IRS audit and security background proved essential for moving programs and projects forward across organizational boundaries.

Architecture

Accountable for developing, maintaining and overseeing the execution of formalized technology, application, platform, and systems integration strategies. Conduct enterprise-wide analysis, collaboratively establish technology road maps, champion critical changes and negotiate statewide standards and policies in the form of Enterprise Architecture. Ensure the successful development and effective execution of IT strategies. Systematic management of enterprise IT standards, policies and strategies for a portfolio of technology platforms, products, and practices.

As part of the evaluation during the Network Modernization program, clear issues with the existing security policy and statewide architectural standards emerged. In cooperation with the State Risk Officer, co-authored a Request for Proposals (RFP) for a rewrite of the security standards, with an emphasis on modernized practices based on NIST and DoD standards.

Plans for revisions of the statewide architectural standards began with an outline of critical sections requiring position papers for new technologies. Began revisions of the firewall, hosting, and n-tier architecture, completing drafts.

Cloud Initiatives

Investigation of statewide data center Cloud usage with external and hybrid Microsoft Azure VM (compute) services. Also involved in work done with RedHat OpenShift (backed by AWS VMs) and a direct Amazon engagement.

Ensure the successful development and effective execution of IT strategies. Manage enterprise IT standards, policies and strategies for a portfolio of technology platforms, products, and practices. Initial focus on converged technologies with focus on network modernization with software defined networks (SDN).

Software-defined infrastructure (SDI) strategy for the state, covering SDS (storage), SDN (network), VM (compute), and orchestration of the services.

“Utility-based computing” for state agency usage, with both a technical and a financial model.

Virtual desktop infrastructure (VDI) for state desktop replacement (infrastructure and financial), including external cloud-based services for cloud desktops and network analysis of those services.

Networking

Assisted in developing and defining a program for the modernization of the State of North Carolina data center networks. Consolidated the understanding of the network infrastructure held by multiple stakeholders into a document outlining the known state of the data center networks. The network at the time was overly complex, with many static features that resisted rapid change or automation. The configuration of the individual network segments and network services lacked standardization, which resulted in complex configurations prone to failure during change events.

In response, led a group in writing a Request for Information (RFI) asking third parties to help the State understand how to modernize its data center networks to embrace new concepts such as cloud, third-party hosting, and mobility of services, and to incorporate enough flexibility into the State network to allow for new methods of doing business.

As part of the evaluation during the Network Modernization program, clear issues with the existing security policy and statewide architectural standards emerged, leading to the RFP for security standards rewrite described in the Architecture section above.

Originally hired to build data center infrastructure for an ML moonshot project — automated essay assessment for school system end-of-year exams — and rapidly pivoted to an AWS-hosted solution. Built a custom scalable compute and monitoring platform with a team of engineers that scaled to thousands of first-generation spot EC2 instances for ML scoring workloads, then scaled down. Owned all costs and cost controls across the platform (FinOps before the term existed). Scope expanded from systems administrator to systems programmer to cloud architect to machine learning engineer: optimized low-level ML libraries (CBlas, LPSolve, Shogun), ported the ML Toolkit to native Windows, and contributed patches back to open source communities. Also built a private cloud platform (Eucalyptus 3.4 replicating AWS services) with iSCSI shared storage for on-premises workloads. Concurrent with Georgia Tech MS coursework in machine learning — SVP Dr. Kirk Ridge provided the recommendation letter and support that enabled entry into the OMSCS program.

AI Technologies

Implemented a custom MIT StarCluster deployment for distributed computing across combined AWS and Eucalyptus environments.

MXE: cross-platform compilation of the tools and libraries necessary for AI and ML work, including gcc and its associated toolchain, ATLAS, LPSolve, CBlas, Eigen, ColPack, ARPrec, Ccache, and LAPack. Contributed patches and modifications back to the open source communities. Ported SHOGUN to the native Microsoft Windows platform using the MXE cross-compilation environment. Ported the Machine Learning Toolkit (MLT) to both a 64-bit Cygwin environment and native Linux packaging.

Analytics Server – Built a shared analytics server providing Octave, R, Shiny, rStudio Server, SAGE Notebook / IPython Notebook, and MySQL for the AI researchers. This was an early data engineering platform built to supplant the existing SAS installation and provide a shared environment for the data science and machine learning teams — predating formal fluency in AI/ML practices but applying the tools, utilities, and platform patterns learned along the way to support the machine learning work. Developed an interactive interface and shared environment for use between applications.

Cloud Computing

Advise software development teams on architecting and designing infrastructures that safely and efficiently utilize a cloud computing environment. Amazon Web Services (AWS) investigation for use in distributed high performance AI computing workloads. Manage AWS account activity, project usage and billing.

AWS development stack includes Java, C#, Python, Groovy (Grails), Perl, and C. Built AWS DotNet SDK in Mono on Linux and developed proof of concept examples for evaluation. Developed a Grails S3 web browser application as proof of concept. Contributed to open source Eucalyptus 3.3.0 project.

Implemented Eucalyptus 3.4 with an iSCSI shared storage platform (FreeNAS) in a multi-tier configuration to provide local providers of AWS services including EC2, EBS, IAM, S3, AMI, Auto Scaling, Elastic Load Balancer, and CloudWatch. Implemented a test platform for a local S3 service using Ceph and RiakCS integrated with Eucalyptus 3.4 IAM. Extended a local SNS implementation with an in-memory Scala implementation. Extended a local SNS stub provider to serve as a mock provider for web services.

Investigated OpenStack, CloudStack, and AWESOME for AWS compatibility, with mixed results. Each had areas of excellence, but Eucalyptus 3.4 mapped the greatest number of the AWS SDK features needed for the distributed scoring platform.

Machine Learning

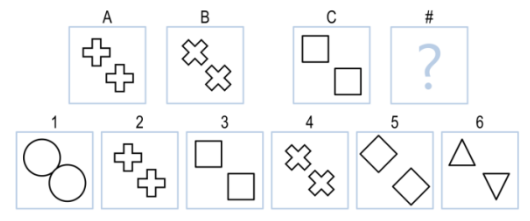

Implemented in Python a knowledge-based AI agent that uses generic learners to solve the Raven’s Progressive Matrices visual intelligence test. Applied machine learning methods from natural language processing (NLP) to image and pattern recognition through generic, non-domain-specific, attribute-based evaluations. The implementation involved both shape matching and identifying the transformations of shapes between frames. Weighted models of attributes or feature values were used to determine which features affected the identification of important transforms.

A simplified example of the proposition is to solve “A is to B as C is to #” from the accompanying figure. Human cognition quickly identifies figure 5 as the solution, a 45° rotation of the shapes. An AI agent must identify the mapping between the shapes in A and B, then the transforms performed on B to make them match A.
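The analogy can be sketched in a few lines of Python. This is an illustrative simplification, not the original agent: each frame is reduced to a hypothetical attribute dictionary, the attribute weights are invented for the example, and the transform is inferred from A to B and then applied to C.

```python
# Hypothetical attribute weights: a shape match counts more than a fill match.
WEIGHTS = {"shape": 3.0, "rotation": 2.0, "fill": 1.0}


def infer_transform(a, b):
    """Describe how frame A becomes frame B as per-attribute changes."""
    transform = {}
    for key in a:
        if key == "rotation":
            transform[key] = ("rotate", (b[key] - a[key]) % 360)
        elif a[key] != b[key]:
            transform[key] = ("set", b[key])
    return transform


def apply_transform(frame, transform):
    """Apply the inferred changes to another frame."""
    result = dict(frame)
    for key, (kind, value) in transform.items():
        if kind == "rotate":
            result[key] = (frame[key] + value) % 360
        else:
            result[key] = value
    return result


def score(predicted, candidate):
    """Sum the weights of the attributes on which the two frames agree."""
    return sum(w for key, w in WEIGHTS.items() if predicted.get(key) == candidate.get(key))


def solve(a, b, c, candidates):
    """Pick the candidate label that best matches the transform of C."""
    predicted = apply_transform(c, infer_transform(a, b))
    return max(candidates, key=lambda label: score(predicted, candidates[label]))


# Hypothetical frames: B is A rotated by 45 degrees.
frame_a = {"shape": "square", "rotation": 0, "fill": "no"}
frame_b = {"shape": "square", "rotation": 45, "fill": "no"}
frame_c = {"shape": "triangle", "rotation": 0, "fill": "yes"}
answers = {
    1: {"shape": "triangle", "rotation": 0, "fill": "yes"},
    3: {"shape": "square", "rotation": 45, "fill": "no"},
    5: {"shape": "triangle", "rotation": 45, "fill": "yes"},
}
```

With these sample frames, `solve(frame_a, frame_b, frame_c, answers)` selects answer 5, the rotated version of C. The weighting lets a partially matching candidate still be ranked when no answer matches exactly.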

Software Defined Networking

Python implementations of several network simulations to model and test changes, using a custom-built environment of Python, Mininet, and Linux virtual machines. The projects included building:

- A learning switch with a customized weighted finite state machine (FSM)

- A combined firewall and intrusion prevention system (IPS) using an OpenFlow controller and sFlow monitoring

- A TCP Fast Open implementation based on the original Google research paper and IETF RFC 7413

- A Multipath TCP (MPTCP) implementation using Linux kernel modifications and TCP stack updates, with IETF RFC 6824 as the implementation guide
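The core idea behind the learning switch in the list above can be sketched without Mininet or an OpenFlow controller. This is a plain-Python illustration of MAC learning only; it omits the weighted finite state machine, and the class and method names are hypothetical.

```python
class LearningSwitch:
    """Minimal MAC-learning logic: remember where senders live, flood unknowns."""

    def __init__(self):
        self.mac_to_port = {}  # learned mapping of MAC address -> switch port

    def handle_frame(self, src_mac, dst_mac, in_port):
        """Learn the sender's port, then decide where the frame should go."""
        self.mac_to_port[src_mac] = in_port
        out_port = self.mac_to_port.get(dst_mac)
        if out_port is None:
            return "FLOOD"  # destination not yet learned: send out all other ports
        if out_port == in_port:
            return "DROP"   # destination sits on the arrival port: nothing to forward
        return out_port
```

The first frame toward an unknown host is flooded; once that host replies, its port is learned and later frames are forwarded directly.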

Brought these projects back to the company network to help with production networking challenges in our large-scale web services deployment, including modeling latency issues for remote educational institutions in rural Canada. TCP Fast Open support in Chrome influenced server builds for our flagship web-based testing platform. Network modeling continues to be used as other network issues are encountered.

Virtualization Technologies

Implemented Xen and KVM virtualization environments that supported both Linux and Windows virtual machines to provide a reliable environment for development and investigation of technology. Progression of investigation migrated to the Eucalyptus cloud environment.

Performed hardware maintenance for existing servers, including equipment replacement and capacity planning. Researched alternatives, purchased hardware, and implemented a VMware ESXi 5.5 server to allow migration of legacy applications and servers from physical to virtual machines (P2V). The P2V conversion process included legacy OS environments.

The ESXi implementation included integration of AD permission models with developer access to virtual machine management. Windows 2003/2008/2012, Red Hat Enterprise Linux, CentOS, and Ubuntu virtual machines were all supported in the environment. The implementation required an advanced understanding of IP, subnets, VPNs, VLANs, network routing, firewalls, load balancing, and switching as they relate to the VMware platform. Storage configuration included iSCSI both in clients and on the ESXi software. Troubleshot ESXi server and virtual machine performance issues as necessary.

UNIX Systems Administrator

Implemented Kerberos and LDAP integration in a combined Windows and Linux environment to support shared environments. The Linux integration used a customized PAM configuration with LDAP and Kerberos, providing standardized authentication and authorization (A&A) for Linux services and allowing single sign-on from each user’s primary Windows AD account. Production environments were primarily Ubuntu LTS, with support for other Linux versions provided as required.

Upgraded several production Ubuntu systems from legacy LTS releases to 12.04 and planned the migration to 14.04 LTS. Implemented a Linux Desktop initiative that brought several users into the Linux environment, with support for email, file sharing, development, source control, and integrated A&A. File sharing incorporated Samba 4 integration. Supported CentOS and Red Hat Enterprise Linux as required by projects.

Windows Systems Administrator

Administered Microsoft Windows services including Active Directory, RADIUS, Kerberos, LDAP schema extension, and DHCP/DNS integration, along with user and group accounts. Developed procedures for onboarding new employees and offboarding departing employees. Documented the integration of UNIX tools and services for Windows, including Xming, PuTTY, and WinSCP.

Upgrade and document the CruiseControl.Net Continuous Integration (CI) build server used across the Software Products division. Refactor configuration to reduce local settings in build scripts. Update software versions for all modules and dependencies. Document installation process and known issues.

Plan migration of Active Directory from Windows 2003 to Windows 2008 R2. Procure hardware for migration.

Network Administrator

Migrate Checkpoint DHCP services off device to AD. Migrate private IP ranges to expand addressable space for separation of services: servers, network, cloud, and desktop devices. Manage and configure HP ProCurve switch and F5 Load Balancer. Implement evaluation Nagios monitoring across Linux and Windows services.

Researched available software tools to replace CheckPoint VPN services. Evaluated an OpenVPN-ALS SSL-VPN solution with an Apache reverse proxy, shared certificates, and Java applet clients, together with the Guacamole clientless remote desktop integrated with Active Directory, for use in a secure environment.

Database Administration

Identify, recommend, and implement new database technologies for distributed AI Scoring System. Responsible for MySQL and PostgreSQL logical and physical database design, implementation, and maintenance on Linux platform.

Create new databases and users: set up backups, export, and other monitoring scripts as necessary. Provide support for database maintenance and disaster recovery across both Windows and UNIX environments.

Involved in all phases of database development, from needs assessment to QA, design, and support. Migration and upgrade of SQL Server 2008 to 2008R2 for legacy systems. Built and maintained SQL Server 2012 servers for use in Streaming Scoring web service and distributed AWS processing platform. Technology investigation of Hadoop for use in model building.

Administered validated systems for the pharmaceutical industry under FDA CFR Part 11 compliance — not standard systems administration but validated systems administration where every action is auditable, every installation documented, and every change request traceable for federal audit. Designed multi-tier SAS architectures for 80+ customers in unique configurations, including the first production Disaster Recovery capable multi-tier SSO system for a clinical trial customer (7 servers + 8 load-balanced terminal servers). Automation-first approach using Puppet, scripting, and SAS product customization to ensure consistency across validated environments. Completed all six available SAS certifications in six consecutive months — one per month, the fastest anyone had accomplished this in any division at SAS: Platform Administrator, Base Programmer, Clinical Trials, Data Integration, BI Content Developer, and Statistical Business Analyst.

Disaster Recovery Implementation

Designed the first production Disaster Recovery (DR) capable multi-tier SSO system for a clinical trial customer. The system comprised SAS Drug Development (SDD), Clinical Data Integration (CDI), a custom web application server hosting multiple custom applications, and an Axway Cyclone server, for a total of seven servers, along with a bank of eight load-balanced Windows Terminal Server client systems. The mirroring provided full replication of the OMR (metadata), Oracle (database), file systems, and external transport, with all software client configurations mapped across every defined period. Extensive scripting and architecture work were required to accomplish this comprehensive solution.

Development

Design, develop and train users on a custom extension to DI Studio allowing for lookup and harmonization processes to be managed for a non-SAS Content Server by clinical trial end users. Wrote design specifications for management of metadata path information in OMR for Xythos servers.

Perl, Java, Clojure, shell script, SAS Base and SAS Macro programming as necessary to implement business solutions and automation for customers across the range of SAS Solutions.

VMware ESXi with vFabric tcServer integration to SAS Drug Development 4.2. Built a VMware ESXi 5.1 VM only system for a full three-tier platform as demonstration of technology.

Documentation

Write documentation for repeatable processes and run books to allow for other team members to support systems. Update validated installation and maintenance documentation. Review and update over one thousand Change Requests (CR) in support of audit compliance for customers.

ITIL

Designated to evaluate “Service Operations” and “Service Transition” as pertains to the SAS operations. Reviewed existing processes and documentation and provided feedback on changes to facilitate the transition to ITIL best practices for validated systems environments.

Certification

Completed all six available SAS certifications in six consecutive months — one per month, the fastest anyone had accomplished this in any division at SAS:

- SAS Certified Platform Administrator for SAS 9

- SAS Certified Base Programmer for SAS 9

- SAS Certified Clinical Trials Programming Using SAS 9

- SAS Certified Data Integration Developer for SAS 9

- SAS Certified BI Content Developer for SAS 9

- SAS Certified Statistical Business Analysis Using SAS 9

SAS Platform Administration

Supported all SAS Solutions as customer requirements dictated. Designed, installed, and managed multi-tier SAS architectures for over 80 SAS customers, each in a unique configuration. Most were three-tier environments comprising a mid-tier (web), a compute tier (SAS), and a storage tier (database).

Configurations included LSF Grid implementations, load-balanced mid-tiers (web), load-balanced compute tiers (SAS), and variations on these. Designing the plan and architectures was part of the Platform Administrator’s role at SAS.

Validated Systems

Primary responsibility was expert-level response for SAS Drug Development (SDD), SAS Clinical Data Integration (CDI), and Clinical Trial support services. Performed validated installations for systems participating in clinical trials to meet CFR Part 11 compliance. Practices for every action in the validated environments were reviewed for auditability. This documentation-intensive work is restrictive but provides traceable actions for all activities in the environment, as required by the FDA.

SAS Fraud and Retail Systems

The transition to a flat support model for Platform Administrators added the installation and management of non-validated environments, including SAS Fraud Framework and SAS Retail Solutions. Carried the validated-documentation mindset over to the non-validated solutions, implementing repeatable installation processes and run books for standardized management.

Systems Administration

Administered Microsoft Windows Server 2000, 2003, and 2008 platforms in both legacy NT domains and Active Directory (AD) directory services, with integration services for UNIX using LDAP (SSL) and Kerberos authentication. Integrated the Windows platform with SAS Metadata Server authentication and authorization.

Administer UNIX operating systems to include RedHat Linux, Oracle Linux, IBM AIX, Oracle/Sun Solaris, and HP-UX. Integration with authentication and authorization services from Microsoft platform and independent A&A services. Evaluate cfEngine and assist with final Puppet implementation for SDD 4.2.

Provided local infrastructure support for backups, storage, database, patching, auditing, networking, and all other administration tasks on an as-needed basis. External Remote Managed Service (RMS) offerings included support for external infrastructure provided by customers. Performed database administration tasks as required for Oracle, MySQL, and PostgreSQL.

Grew from firewall administrator to policy and controls leader over four years, rapidly expanding security scope from IC work to contributor on nationwide projects and multi-state initiatives. Skip-level reported to the Secretary of Revenue for half the tenure, taking direction from the top tier of the organization. Part of the five-person team that passed the IRS SafeGuard audit — a seven-month preparation producing a 1,300-page report covering all IT infrastructure, with no noteworthy findings (acknowledged by IRS auditors as a significant achievement). Loaned to the IRS for Congressional subcommittee work (ETAAC) developing third-party tax preparer security standards based on NIST 800-53 controls adapted to IRS Publication 1075. Integrated security reviews into every step of the SDLC, reducing rework costs and improving PCI and IRS compliance. Applied NIST 800-53, FISMA, FIPS 140-2, PCI-DSS, and ISO/IEC 27002 frameworks.

Project Management

Perform multiple roles in project management. Perform and prepare feasibility and risk assessments, gather business requirements, develop project plans, organize, manage and allocate resources, and monitor and control progress. Participate as a key stakeholder in multiple critical agency projects. Primary goals as a security professional are to manage risk, provide impact & probability analysis while tracking progress on projects.

Other projects as a core team member: Online Filing and Payment (OFP), Fuel Tax Services (FTS), IRS Secure Data Transfer (SDT), Financial Institution Data Match (FIDM), Financial Institution Record Match (FIRM), NC3 eFile, Network Segmentation (NS2), Wireless 802.11 assessment, eDORSA (FTI data access reporting), ITIL Configuration and Asset Management lead for IT Security, Taxpayer Kiosk assessment, IPSec to SSL VPN conversion, Network Controls assessment, PKI assessment, and yearly legislative tax update review.

Tax Information Management System (TIMS) project member for numerous committee and functional groups planning for the replacement of the existing mainframe based tax administration system. Engaged in the RFI (request for information), RFP (request for proposal), and contract review process prior to project initiation. Active reviewer and documenter of security requirements. Provide rapid risk assessments for evolving systems. Certify compliance to state, federal and PCI standards.

Development

Software Development Life Cycle (SDLC)

Documented development processes and provided a detailed assessment to close gaps in policy before the November 2007 IRS audit. Implementation of the assessment added manual and automated code reviews for web development. Defining coding best practices and coding standards improved code quality, and adding web application vulnerability scanning improved PCI and IRS compliance.

Actively promoted the removal of administrative and root access for all development staff. Removal was done to improve development processes and segment activities between groups. Maintaining the subset of access necessary to perform software installation in non-production and provide a standardized build environment improved software quality.

Promoted the replacement of the legacy Microsoft SourceSafe source control system with the open source Subversion and its associated reporting and administrative tools. The implementation gave technical writers, business users, developers, and testers access to documentation and software, and linked defect tracking to source code changes.

Security reviews and sign-off were integrated into each step of the DOR SDLC process from business requirements to the completed production release. Integration of security reviews and sign-off into each step increased compliance and decreased re-work costs from the prior methodology.

Audit

Manual code reviews for multi-tier J2EE web applications in WebSphere and JBoss, along with both automated and manual vulnerability testing, are performed as part of the security review process for all web applications. SPI WebInspect, custom Perl scripts, WebScarab, Metasploit extensions, and manual testing are used for all web applications released. Local desktop Java applications are also reviewed as necessary.

Review Perl and shell script automation for UNIX systems. Review MS Access prototypes for data imports, reporting, and data analysis. Write and review C code for several custom-developed components as necessary.

Programming

Wrote the complete specification for the automation of an authorization tracking system called EDORSA. Designed an automated system to incorporate business rules, automated approval routing, and integration with the SAP system (NC BEACON) and the ITIL CMDB. The agency FTI data access tracking and reporting system is critical to federal audit requirements.

Committees

ETAAC: A member of the Electronic Tax Administration Advisory Committee (ETAAC) sub-committee on computing security that reports to Congress annually. The ETAAC provides for discussion of electronic tax administration issues in support of the goal of paperless filing. Provided input into third-party tax preparer security standards based on moderate level controls from NIST 800-53. A Congressional report applied these controls as a baseline.

IRS TAG-SS: Active participant in the IRS Tactical Advisory Group Security Sub-group (IRS TAG-SS), a partnership between the IRS and state taxation agencies that gives state agencies input into IRS Publication 1075 (1075). Publication 1075 contains the security guidelines that provide safeguards for protecting federal tax information.

FBI InfraGard: Participated in both the national Cyber Storm II and III exercises for the NC Dept of Revenue. Maintain membership in good standing and review varied information provided to membership.

Security

Risk Assessment & Vulnerability Management

Write vulnerability and risk assessments for agencies, both for requests for new projects and for managing existing infrastructure risks. Engage with technical staff in the implementation of mitigating controls for vulnerabilities assessed. Report monthly to senior management on outstanding vulnerabilities and risks for the agency, and provide a yearly summary.

IRS SafeGuard Audit (Dec 2007)

Part of a five-person team that passed the IRS SafeGuard audit for the NC Dept of Revenue. This federal audit was a significant assessment requiring seven months of preparation and a delivered report of over 1,300 pages covering all aspects of the IT computing infrastructure for the agency. The lack of noteworthy audit findings was acknowledged as a significant achievement by the IRS auditors. Continue with yearly updates on the original report while implementing the TIMS mainframe replacement project.

NC State Auditor’s Office

Provide the NC Auditor’s Office with information as required for yearly IT audits. No significant findings from these audits. Symantec Security Services provided an assessment for use by the Auditor’s Office in 2006. This comprehensive report, which included vulnerability scans of internal networks and services, found minimal issues. Some findings required architectural modifications and continue to be mitigated and resolved.

Policy Frameworks

Utilize several security frameworks including IRS Publication 1075, NIST 800-53, ISO/IEC 27002, PCI-DSS, FIPS 140-2, NC STA (North Carolina Enterprise Technical Architecture Standards), and other varied standards as appropriate. Frequently reference Department of Defense (DoD) standards with moderate level controls. Apply new standards, policies, and statutes as appropriate. Designated as the primary liaison to the NC Attorney General’s Office, a role that carried significant additional workload but was essential for moving security and compliance matters forward. A well-rounded background in legal language and the ability to translate between technical and legal requirements made the liaison role effective.

ITIL

Active member of the IT FIT (Information Technology Framework for IT services) as part of the ITIL (Information Technology Infrastructure Library) business and process analysis. Active in Incident, Change, Configuration, and Asset Management process reviews. Core member for Incident process review of BMC Remedy implementation for the existing DOR HelpDesk functions. Part of the Pink Elephant 2007 and HP 2009 assessments for agency ITIL maturity.

Administration

Microsoft Windows

Windows systems administration and troubleshooting across a diverse set of systems, from Windows 2000 through the most current Microsoft operating systems. Active Directory, Kerberos, and LDAP (with SSL) integrated authentication and authorization for cross-platform single sign-on is the main focus, along with a secondary role in application deployment, patch management, and desktop policy enforcement. The new TIMS system increases the Windows server environment significantly. VMware ESX 4 was utilized to allow for rapid deployment of new servers.

Database

Oracle, IBM DB/2, Sybase, TcX MySQL, MS SQL Server, and MS Access are all used in the agency and assessed for compliance with relevant security standards. Review the configuration and information management for each individual system and database. Oracle dominates the new environment, with DB/2 dominating the legacy environment. Ad-hoc projects in MS Access with business users allow for prototyping and writing solid specifications to be migrated to other database systems.

UNIX

Audit and provide assistance managing Novell SuSE Enterprise Linux, Red Hat Enterprise Linux, and IBM AIX 5L and 6L series for the agency. Review scripting in Perl, Python, and various shells for automation tasks. Audit for security policy compliance and assist in bringing systems into compliance. Limited evaluation of VMware ESX 4 for Linux hosts.

Network and Firewall Systems Admin

Management of combined Cisco and Nortel switches, routers and firewalls. Access both GUI and CLI interfaces for audit and review on a periodic basis. Review and certify all firewall rule changes. Assist developers and network administrators in meeting business requirements while meeting security standards for network configuration requests and changes.

Environment management includes multiple publicly exposed DMZ zones with load balancing, SSL acceleration, IDS/IPS, and complex firewall rule-sets. Nortel Alteon, Nortel Passport 8600, Nortel 5520, Cisco ASA 5500 series, and a Cisco NAC are some of the network equipment in the environment.

Review and respond to NC ITS Foundstone scans and alerts. Manage local WebSense URL monitoring system.

Maintained on-call status with a cellular modem and laptop for immediate rapid response to emerging security incidents. Actively monitored hundreds of security feeds on emerging threats and vulnerabilities, providing early warning and risk assessment for the agency. This was challenging but fulfilling work that required constant vigilance and the ability to distinguish signal from noise across a high volume of threat intelligence sources.

As a member of the Electronic Tax Administration Advisory Committee (ETAAC) subcommittee on computing security, I provided significant input into third-party tax preparer security standards based on a subset of the moderate level controls from NIST 800-53. This furthered the mission of the ETAAC, which reports to Congress annually and provides for discussion of electronic tax administration issues in support of the goal of paperless filing. The final Congressional report applied these controls as a baseline for this industry. 2009 ETAAC Annual Report to Congress

Consulted on all aspects of IT including hardware, dental software, and HIPAA/policy compliance for a private dental practice spanning over a decade. Managed the technology lifecycle from initial infrastructure buildout through the owner’s retirement in 2011 — including final data transfer and secure closeout during the practice sale. Long-running engagement held concurrently with full-time positions at NC DOR, SAS Institute, BD Biosciences, NC Community College System, and others.

Evaluated networking and server infrastructure needs for a virtual reality technology company. Provided programming expertise for web services, extended a custom credit card verification system, and provided database administration support. Part-time engagement held concurrently with full-time positions at NetIQ, Hosted Solutions, NC Community College System, and BD Biosciences.

IT Director for the BD Treyburn manufacturing plant: sole IT leader managing all IT services, budgets, and one direct report, with dotted-line reporting to the BD CIO and direct reporting to the plant manager. Owned costs and financials for all IT operations. Navigated a complex environment spanning an Apriso MES implementation with custom Oracle/SAP integrations, Genesis SAP deployment, phone system and network replacement, and medical device standards compliance. Led the Windows NT domain to Active Directory migration and SMS 2003 deployment. Integrated Allen-Bradley ControlLogix PLCs with manufacturing floor automation systems. Leveraged Lotus Notes and Domino experience from Ziff-Davis to manage BD’s email and collaboration platform for 200+ users. Trained in BD Project Management Mastery (PMM) and Six Sigma. Built on the multi-site management experience from NC LIVE to lead a manufacturing plant IT operation in a regulated industry.

System Programming

Maintain existing systems, including a report generation system and a CAPA incident tracking system.

A new barcode label printing system critical to the pipet production line was implemented in Visual Studio 2005 and SQL Server 2005. The same system is being designed to later incorporate all label printing, including the Tissue Culture and Tube product lines.

Project Management

Begin training in the BD PMM (Project Management Mastery) program in preparation for the PMP (PMI certification). Three major projects (one being a Six Sigma Green Belt project).

Explored feasibility, gathered requirements, and determined project plans for two infrastructure upgrades. Reviewed the design of existing stalled projects and provided a written project plan to close them out or bring them back online.

UNIX Systems Administrator

Debian (on Sun UltraSPARC) server for use in network management, automated backup services, and basic system automation services. Solaris 8 system conforming to BD standard used for automation systems.

Allen-Bradley PLC Programming

Training in ControlLogix and RSLogix to allow for extracting data points from PLCs (Programmable Logic Controllers) in automation complexes on the manufacturing floor. MicroLogix and SLC controllers are also used and were learned through independent study.

RSMACC implementation started for two PLC complexes. Implementing under Active Directory. Implementation may be converted to a new AB product line called Asset Manager.

Microsoft Windows Systems Administrator

Administer Windows NT 4.0, Windows 2000 and 2003 servers. Decommission Windows NT 4.0 domain and migrate to Active Directory. Implement SMS 2003 for all server and desktop systems for software installation and patching. Services on servers include web servers, File and Print services, DHCP, SMS, Oracle, FlexNet MES, and Lotus Notes. Audit of software and hardware.

Oracle Database Administrator

Apriso MES (Manufacturing Execution System) running on Oracle 8 and 9i with custom extensions integrating to Oracle and SAP (Genesis) for manufacturing data flow. Web interface includes Crystal Reports and custom Apriso software. A re-implementation of the backup system was done to improve restoration time and reliability. Further integration with control systems required PLC programming.

Lotus Notes Administrator (Email)

Primary email administrator for over 200 users. Upgraded the server, applied quarterly patches, and updated server virus scanning capacity. Performed a capacity assessment and upgraded the system. Completed an audit of users and implemented quotas. Implemented server-side compression of database and network traffic.

Network Administrator

Management of Cisco and Nortel switches and routers. Added a GUI interface for simplified administration through the Nortel Device Manager, using SNMP in read-only and read-write modes. Gave non-technical staff access to read-only views of network devices. Fully documented the existing network infrastructure for ITIL infrastructure documentation. Added a new wiring closet for plant expansion.

Desktop Support

Provide desktop support for 140 desktop and laptop systems. Includes monthly patching, software installation, and common application support.

Administered Sun Solaris and IBM AIX systems for all 58 North Carolina community colleges. Took initiative on outreach and training — drove to college locations to troubleshoot onsite, coached local administrators on standardized deployments, and worked with leadership on improving implementations across the system. Outages of these platforms impacted payroll, student records, registration, and other critical campus operations. Built Perl and shell automation that reduced manual effort for all 58 local administrators. Led the Solaris 9 upgrade project across 58 heterogeneous college systems while maintaining services to all users and students.

UNIX Systems Programming and Administration